Micron’s 2TB RAM Push: The Quiet Upheaval in AI Server Costs

- 256GB LPDDR5X packages now sampling

- Datacenters gain 2TB RAM per CPU

- Memory costs drop, but compatibility lags

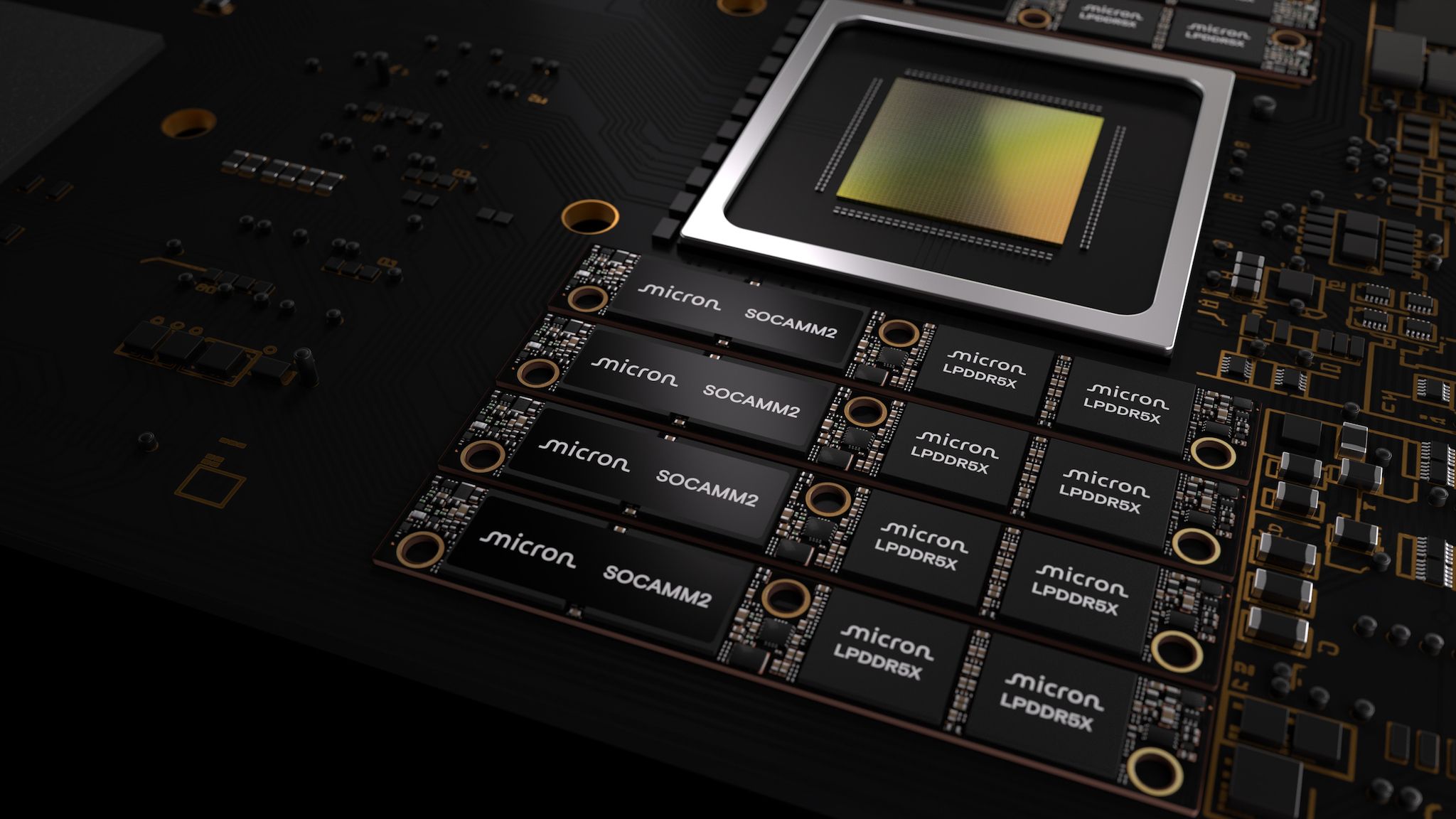

Micron has begun sampling 256GB SOCAMM2 memory packages, a move that puts 2TB of LPDDR5X RAM within reach of a single CPU in AI servers. Tom’s Hardware reports that the first customers are already testing these units, which promise to cut the cost per gigabyte of high-capacity memory by up to 30% compared with traditional DDR5 modules. For datacenter operators, this is more than an incremental upgrade: it could rewrite the economics of AI training and inference workloads.
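To make the headline figures concrete, here is a minimal back-of-the-envelope sketch. It assumes 1TB = 1024GB and treats the "up to 30%" figure as a flat per-gigabyte discount. The baseline price passed in the usage line is a hypothetical placeholder, not a quoted market price, and the function names are illustrative only.

```python
import math

PACKAGE_GB = 256        # from the article: one SOCAMM2 package
TARGET_TB_PER_CPU = 2   # from the article: capacity per CPU
MAX_SAVING = 0.30       # from the article: "up to 30%" lower cost per GB


def packages_needed(target_tb: float, package_gb: int) -> int:
    """Packages required to reach the target capacity (assumes 1TB = 1024GB)."""
    return math.ceil(target_tb * 1024 / package_gb)


def memory_cost(capacity_gb: float, price_per_gb: float, saving: float = 0.0) -> float:
    """Total memory cost after applying a flat fractional saving per gigabyte."""
    return capacity_gb * price_per_gb * (1.0 - saving)


if __name__ == "__main__":
    n = packages_needed(TARGET_TB_PER_CPU, PACKAGE_GB)
    print(f"{n} packages of {PACKAGE_GB}GB reach {TARGET_TB_PER_CPU}TB")

    # Hypothetical baseline price, chosen only to illustrate the arithmetic.
    baseline_price = 10.0
    capacity_gb = n * PACKAGE_GB
    before = memory_cost(capacity_gb, baseline_price)
    after = memory_cost(capacity_gb, baseline_price, MAX_SAVING)
    print(f"Best-case saving per CPU: {before - after:.2f} (same currency as the baseline)")
```

Because the 30% figure is an upper bound, the result is a best case. Actual savings depend on pricing the article does not report.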

But the headline number obscures a critical detail: compatibility. While LPDDR5X offers power efficiency and density advantages, it lacks the broad industry support of DDR5. Server motherboards and chipsets are designed around DDR5’s signaling and latency characteristics, and even some AI frameworks are tuned with those characteristics in mind. Early adopters may face a trade-off between raw capacity and software stability, with some workloads struggling to fully utilize the new memory without firmware or driver updates.

The immediate impact is clearest for hyperscale players like Google, Meta, and Microsoft, which can absorb the engineering overhead to squeeze out cost savings. For smaller datacenter operators, the calculus is less straightforward. The upfront cost of retooling infrastructure could offset near-term gains even with cheaper memory, creating a temporary divide between haves and have-nots in the AI server market.

The real-world gap that specs don’t show

Beyond cost, the 2TB-per-CPU milestone could reshape AI development workflows. Training models that previously required distributed multi-node setups might now fit into a single server, reducing network overhead and simplifying debugging. Micron’s own benchmarks suggest a 15–20% reduction in training time for certain language models, a non-trivial boost for teams iterating on large datasets. The benefit isn’t universal, however. Workloads with heavy inter-node communication, such as recommendation systems or graph neural networks, may see diminishing returns, as the memory bandwidth per socket remains constrained by LPDDR5X’s lower pin count.
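Whether a given model "fits" can be estimated roughly. The sketch below uses a common rule of thumb for mixed-precision training with the Adam optimizer: about 16 bytes of state per parameter (2 for fp16 weights, 2 for fp16 gradients, 12 for fp32 master weights and two optimizer moments). It ignores activations and framework overhead. The 80% headroom factor and the function names are assumptions for illustration, not figures from Micron or the article.

```python
BYTES_PER_PARAM = 16     # rule of thumb: 2 (weights) + 2 (grads) + 12 (fp32 copy + Adam moments)
CAPACITY_GB = 2048       # from the article: 2TB per CPU, taking 1TB = 1024GB
HEADROOM = 0.8           # assumption: reserve 20% for activations, OS, and buffers


def training_state_gb(num_params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Approximate weight, gradient, and optimizer state size in GB (1GB = 1024**3 bytes)."""
    return num_params * bytes_per_param / 1024**3


def fits_on_one_socket(num_params: float,
                       capacity_gb: float = CAPACITY_GB,
                       headroom: float = HEADROOM) -> bool:
    """True if the estimated training state fits within the usable share of memory."""
    return training_state_gb(num_params) <= capacity_gb * headroom


if __name__ == "__main__":
    for label, params in [("7B", 7e9), ("70B", 70e9), ("175B", 175e9)]:
        size = training_state_gb(params)
        verdict = "fits" if fits_on_one_socket(params) else "does not fit"
        print(f"{label}: ~{size:.0f}GB of training state, {verdict} in {CAPACITY_GB}GB")
```

Under these assumptions, a 70-billion-parameter model's training state (roughly 1TB) would fit within one socket's memory, while a 175-billion-parameter model's would not. Capacity is only part of the picture: the bandwidth constraint noted above still determines how quickly that state can be accessed.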

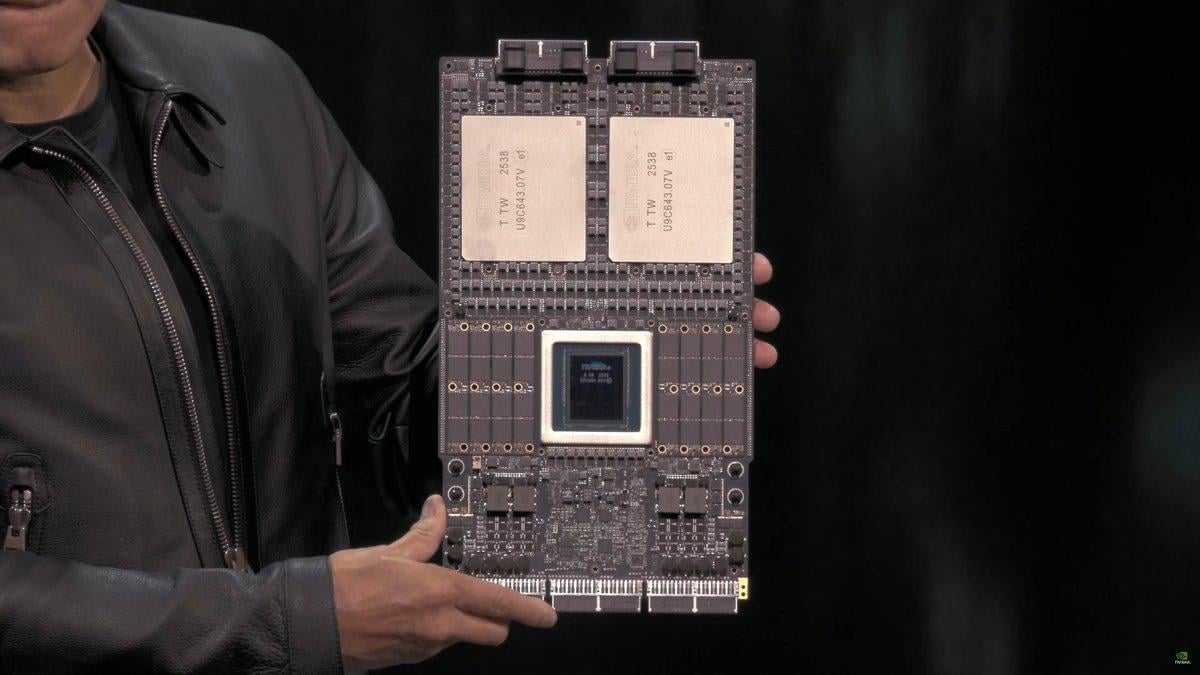

Ecosystem effects are already emerging. AMD and Intel are racing to optimize their server CPUs for LPDDR5X, with AMD’s EPYC Bergamo and Intel’s Sapphire Rapids refresh expected to include better support in late 2024. Meanwhile, NVIDIA’s H100 and upcoming B100 GPUs are being retooled to better interface with the new memory standard, though full compatibility may take another 12–18 months. The delay creates a chicken-and-egg problem: without widespread GPU and CPU support, adoption will stall; but without adoption, hardware vendors have little incentive to prioritize updates.

Then there’s the secondary impact on the broader memory market. Samsung and SK Hynix, Micron’s primary competitors, are under pressure to accelerate their own high-capacity LPDDR5X initiatives. Analysts expect a price war by 2025, particularly in the 128GB+ segment, which could further compress margins. For datacenter operators, this is good news in the short term but carries a longer-term risk. Aggressive pricing might push smaller memory suppliers out of the market, reducing long-term supply chain diversity and increasing reliance on a handful of dominant players.