AI Systems Fail Silently

Published: Apr 11, 2026 at 12:12 UTC

- Distributed AI failures

- Lack of crash warnings

- Subtle performance decline

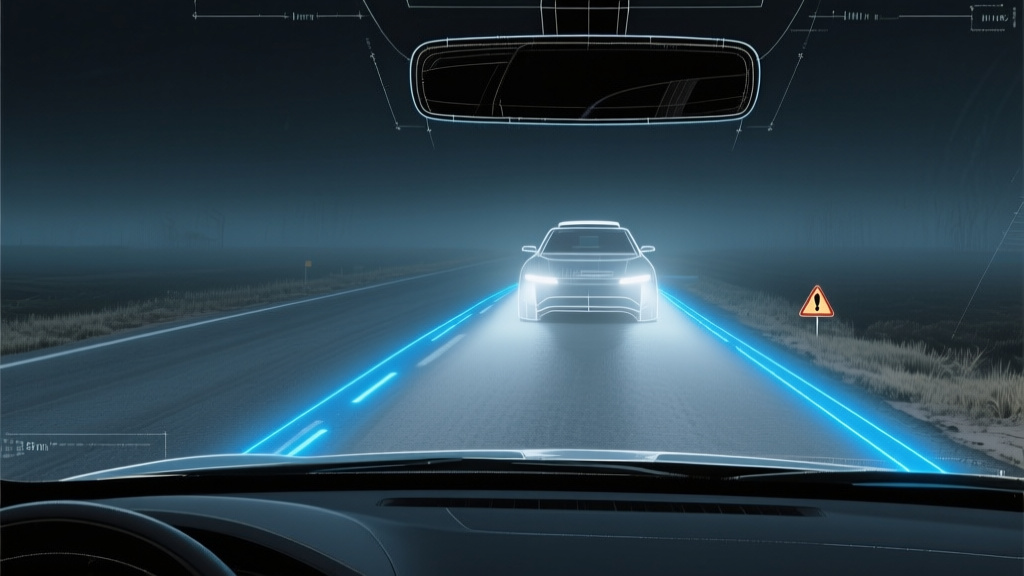

Distributed AI systems in late-stage testing are showing a disturbing pattern: the dashboards report that all is well, yet users notice that the systems' decisions are becoming increasingly unreliable. The cause is not a classic error such as a service outage or a crash, but a quiet drift away from expected behavior. According to IEEE Spectrum, the phenomenon is causing concern among engineers, whose decades of experience have not prepared them for a failure mode in which systems degrade slowly and without warning.

The problem lies not in overt mistakes but in subtle, unannounced deviation from anticipated performance. It is akin to a ship slowly veering off course without anyone on board being alerted. Experts in the field say this silent failure mode poses significant challenges and highlights the need for more nuanced testing and evaluation methodologies.

The gap between benchmark and product

The implications of these silent failures are far-reaching: they affect not only the efficiency of AI systems but also the trust users place in them. As researchers note, the lack of overt symptoms makes it difficult to diagnose and correct issues before they grow into significant problems. This underscores the importance of developing more sophisticated monitoring and diagnostic tools that can detect subtle performance declines. Industry leaders are also emphasizing the need for transparency and accountability in AI development to mitigate these risks.
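To make the idea of detecting a subtle decline concrete, here is a minimal sketch in Python. It is not a method described by IEEE Spectrum or any vendor: the `DriftMonitor` class, its parameters, and the idea of a per-decision quality score are all assumptions made for illustration. The sketch compares the average of recent scores against a baseline recorded while the system was known to behave well, and raises a flag when the gap is larger than chance would plausibly explain.

```python
from collections import deque
from statistics import mean, stdev


class DriftMonitor:
    """Hypothetical helper: flags a quiet shift in a quality score."""

    def __init__(self, baseline, window=50, threshold=3.0):
        # baseline: scores gathered while the system was known to behave well.
        # Assumes at least two baseline values with some variation between them.
        self.base_mean = mean(baseline)
        self.base_std = stdev(baseline)
        self.window = deque(maxlen=window)  # most recent scores only
        self.threshold = threshold          # z-score that counts as drift

    def observe(self, score):
        """Record one score; return True if the recent window has drifted."""
        self.window.append(score)
        if len(self.window) < self.window.maxlen:
            return False  # not enough recent data yet
        # Standard error of the window mean under the baseline distribution.
        std_err = self.base_std / len(self.window) ** 0.5
        z = (mean(self.window) - self.base_mean) / std_err
        return abs(z) > self.threshold


# Usage: no single score looks alarming, but the window average does.
baseline = [0.90, 0.92, 0.88, 0.91, 0.89] * 10
monitor = DriftMonitor(baseline, window=10)
for score in [0.90] * 10 + [0.85] * 10:
    if monitor.observe(score):
        print("drift detected at score", score)
```

A check of this kind runs alongside crash and uptime alerts rather than replacing them. Its value depends entirely on having a trustworthy quality score to feed it, which in practice is often the harder problem.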

The silent failure of AI systems also raises questions about the current state of AI development and deployment. With companies like Google and Microsoft investing heavily in AI, the pressure to deliver functional and reliable systems is mounting. Critics argue, however, that in the rush to market, critical aspects of system reliability and safety may be overlooked.