Geekbench 6.7 Challenges Intel BOT Performance Scores

📷 Published: Apr 24, 2026 at 14:19 UTC

- Geekbench 6.7 invalidates BOT-enabled runs
- Reported BOT boosts can reach 40 percent
- Benchmark validity shifts toward optimization transparency

Geekbench 6.7 has added a direct check for Intel’s Binary Optimization Technology, and that turns a leaderboard trick into a transparency problem.

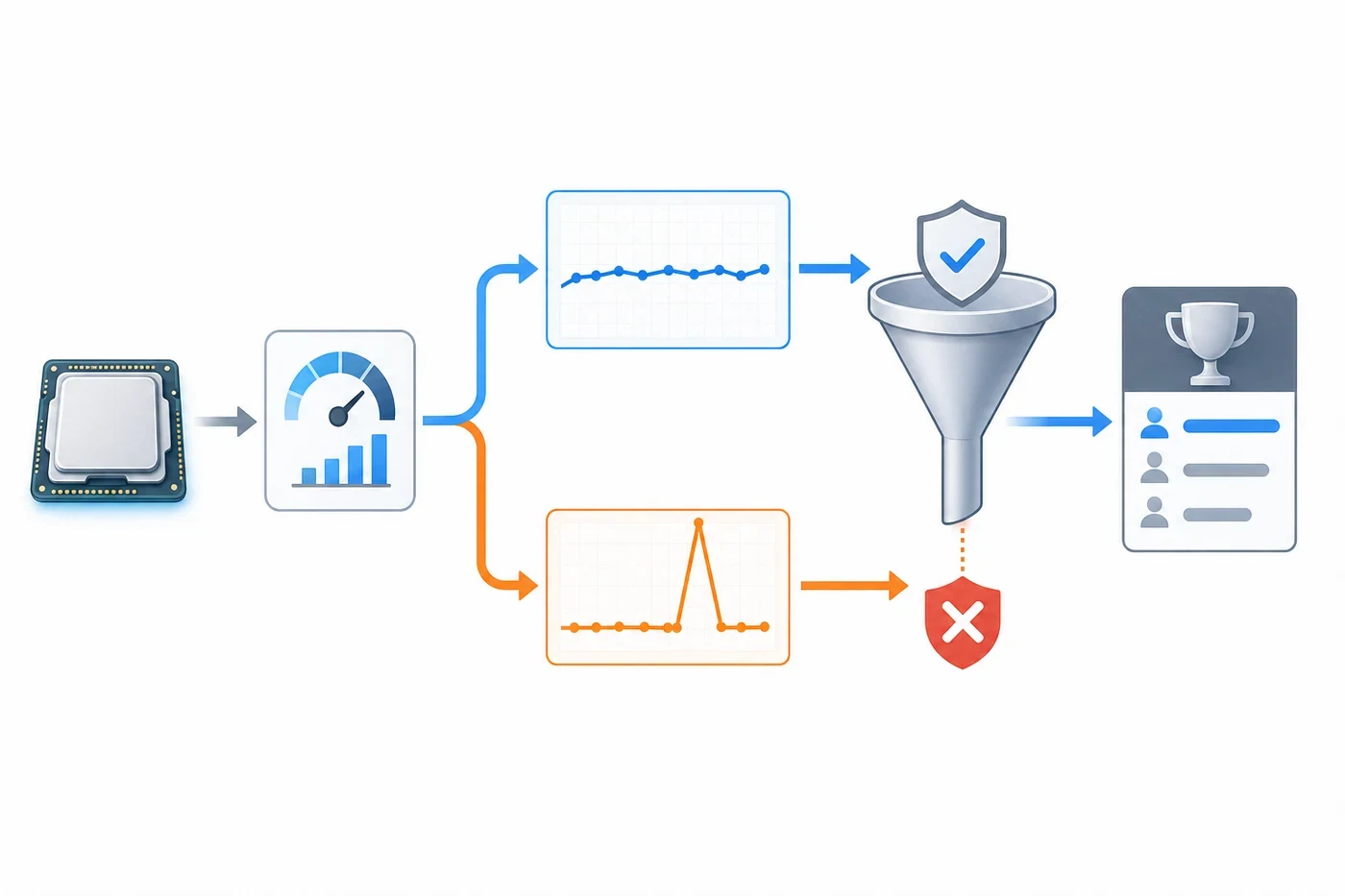

Synthetic benchmarks work because everyone agrees to pretend the test is a neutral scoreboard. That agreement gets awkward when a processor can recognize the workload and optimize specifically for the benchmark rather than for the messy spread of software people actually run. Geekbench 6.7 now flags runs using Intel BOT as invalid, according to Tom’s Hardware. The point is not subtle: a score boosted by benchmark-aware optimization should not sit beside ordinary results as if both numbers were measuring the same thing.

The reported scale of the boost is the uncomfortable part. Available reporting says BOT can raise some Geekbench results by as much as 40 percent, depending on the workload and configuration. That does not mean every Intel system suddenly becomes 40 percent slower in real use. It means the benchmark can be pushed into measuring a curated performance peak rather than a general-purpose performance profile. For buyers, reviewers and IT teams, that difference matters because benchmark tables often compress complex hardware decisions into one very tidy number. Tidy numbers are useful. They are also dangerously easy to polish.
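The arithmetic behind that caveat is worth spelling out. A minimal sketch in Python, assuming a made-up baseline score of 3000 (not a measured result) and the reported upper bound of 40 percent: taking the boost away lowers the boosted score by roughly 28.6 percent, not 40, because the percentage is now taken from the larger number.

```python
def drop_after_removing_boost(boost_percent: float) -> float:
    """Percent by which a boosted score falls once the boost is removed."""
    factor = 1 + boost_percent / 100
    return (1 - 1 / factor) * 100


# Hypothetical baseline score, chosen only to make the arithmetic easy to follow.
baseline = 3000
boosted = baseline * 1.40  # the reported upper bound of the BOT uplift

print(round(boosted))                           # 4200
print(round(drop_after_removing_boost(40), 1))  # 28.6
```

Both figures describe the benchmark score only. Neither says anything about how the same system behaves in software that BOT does not target.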


Synthetic spikes versus real performance

Geekbench’s move is best read as a boundary-setting decision, not an anti-Intel stunt. Benchmark tools have always had to fight the same problem: vendors want scores that flatter their hardware, users want rankings that feel objective, and reviewers need tests that survive contact with real workloads. If a CPU optimization is broadly available to normal applications, it belongs in the performance conversation. If it is tuned around the benchmark itself, the resulting score says far less about the performance users will actually see.

This is why the invalidation badge matters. It does not erase BOT-enabled runs from existence, but it changes their status. They become evidence of what a system can do under a specific optimization path, not a clean comparison point against other architectures. That distinction is precisely the kind of boring metadata that prevents a benchmark chart from becoming performance theater.
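One way to picture that change of status is as a field on the result rather than a deletion. The sketch below is purely illustrative: the `BenchmarkRun` record, the `"BOT"` label, the system names and the scores are all hypothetical, and none of it reflects how Geekbench actually stores or flags results.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class BenchmarkRun:
    """Hypothetical result record that carries its own provenance."""
    system: str
    score: int
    optimizations: frozenset = field(default_factory=frozenset)

    @property
    def comparable(self) -> bool:
        # Assumption for this sketch: a run using BOT is kept on record
        # but excluded from cross-system comparison.
        return "BOT" not in self.optimizations


def leaderboard(runs):
    """Rank only the comparable runs, highest score first."""
    return sorted((r for r in runs if r.comparable),
                  key=lambda r: r.score, reverse=True)


# Made-up systems and scores, for illustration only.
runs = [
    BenchmarkRun("system-a", 4200, frozenset({"BOT"})),
    BenchmarkRun("system-a", 3000),
    BenchmarkRun("system-b", 3300),
]

print([(r.system, r.score) for r in leaderboard(runs)])
# [('system-b', 3300), ('system-a', 3000)]
```

The flagged run stays in `runs`, so it remains available as evidence of what the system can do on that optimization path. It simply does not appear in the ranking.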

The community reaction is predictably split. Some users who treated BOT-enabled scores as legitimate leaderboard achievements will see the change as a penalty. Others will read it as overdue hygiene in a market where CPU performance claims already require footnotes, test conditions and a modest tolerance for vendor optimism. The sharper point is that Geekbench is asserting control over what counts as comparable. In benchmark culture, that is power.

The next question is whether other testing suites follow. Detection systems create their own cat-and-mouse dynamic: the benchmark identifies optimization behavior, vendors adapt, the rules tighten, and the spreadsheet keeps pretending it is neutral. Still, this update is a useful correction. It reminds the market that benchmark validity is not just about high numbers; it is about whether those numbers describe something a user can actually reproduce.

For all the noise around one Intel feature, the real signal is simpler: benchmarking is becoming less about raw score worship and more about provenance. Who produced the number, under what conditions, and with which optimizations enabled: all of that is now part of the result. The leaderboard survived. The illusion got less comfortable.