GO-2: AGIBOT’s embodied AI takes a step—into what?

Published: Apr 9, 2026 at 18:08 UTC

- ★Foundation model for real-world robotics

- ★Claims of reliable execution remain unbenchmarked

- ★Industrial logistics likely first target

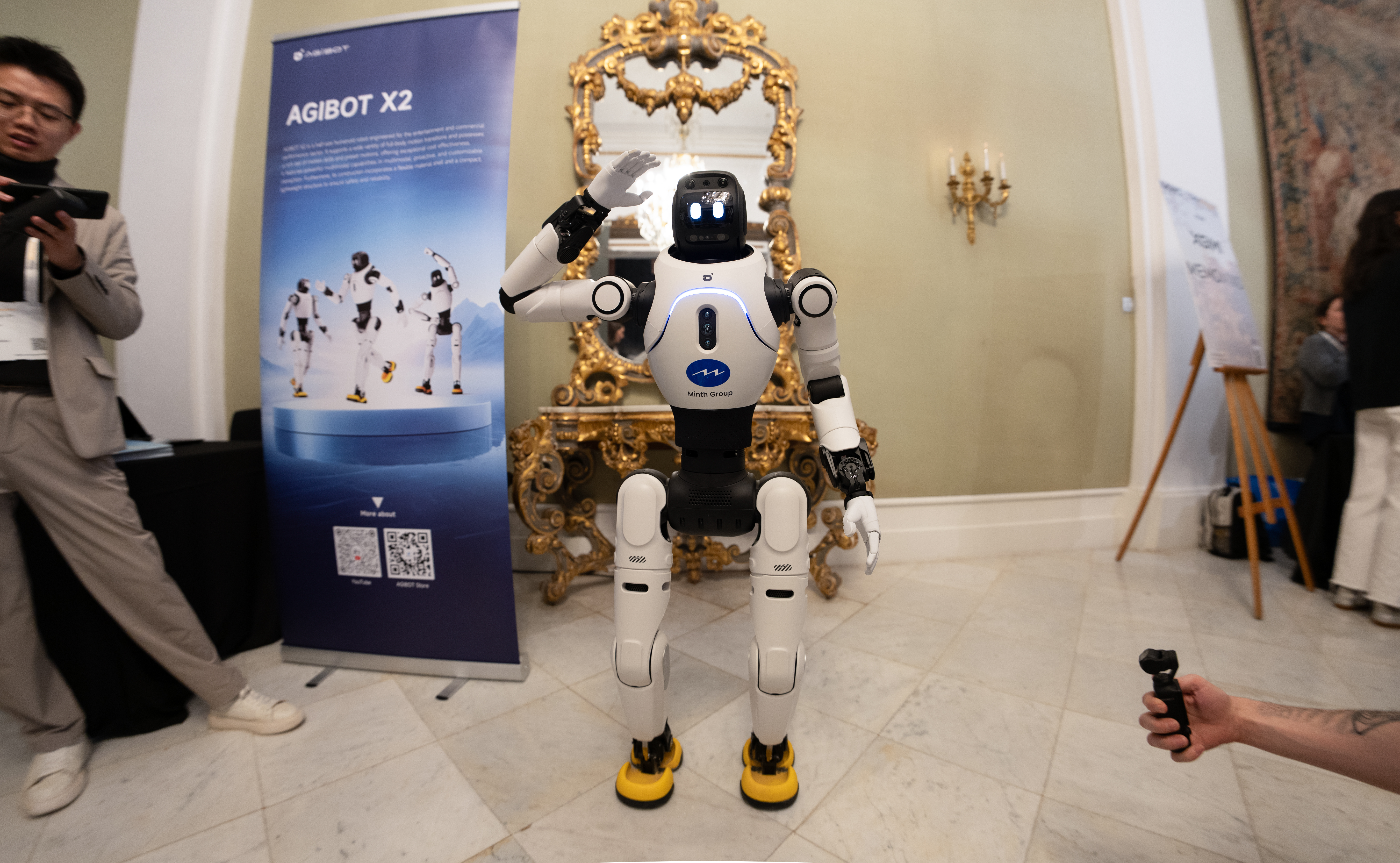

AGIBOT has released GO-2, its latest foundation model for embodied AI, promising robots that can "plan correctly and execute reliably in real-world environments." The announcement, published by The Robot Report, positions GO-2 as a leap forward in a segment where most robots still stumble over dynamic obstacles or unexpected edge cases. If the claims hold, this could be a rare instance where a foundation model translates directly into industrial utility—something even NVIDIA’s Isaac Lab and Tesla’s Optimus have struggled to demonstrate at scale.

Yet the press release offers no benchmarks, no comparative performance data, and no real-world deployment case studies. Instead, we get a familiar narrative: a model trained on vast datasets, now supposedly capable of handling the messiness of physical environments. That’s a high bar, especially when even basic tasks like navigating a cluttered warehouse remain error-prone for most robots. The term "foundation model" suggests GO-2 may build on pre-trained large language or vision-language models, but AGIBOT doesn’t specify how much of that pre-training translates to actual robotic control.

The name GO-2 implies a successor to an earlier model (GO-1, presumably), but AGIBOT hasn’t provided details on what, if anything, has fundamentally changed. Is this a refinement of existing capabilities, or a genuine architectural shift? Without transparency, it’s hard to separate meaningful progress from repackaged marketing.

The demo is impressive; the deployment reality is anyone’s guess

Industrial logistics settings (warehouses, fulfillment centers, and automated manufacturing lines) are the most likely near-term beneficiaries. These environments are structured enough to limit edge cases but complex enough to expose weaknesses in current robotic systems. AGIBOT’s focus on "reliable execution" suggests a model optimized for high-frequency, low-variance tasks: think pallet stacking, conveyor belt navigation, or inventory management. If GO-2 delivers, it could accelerate automation in sectors where human labor remains stubbornly necessary due to robotic unreliability.

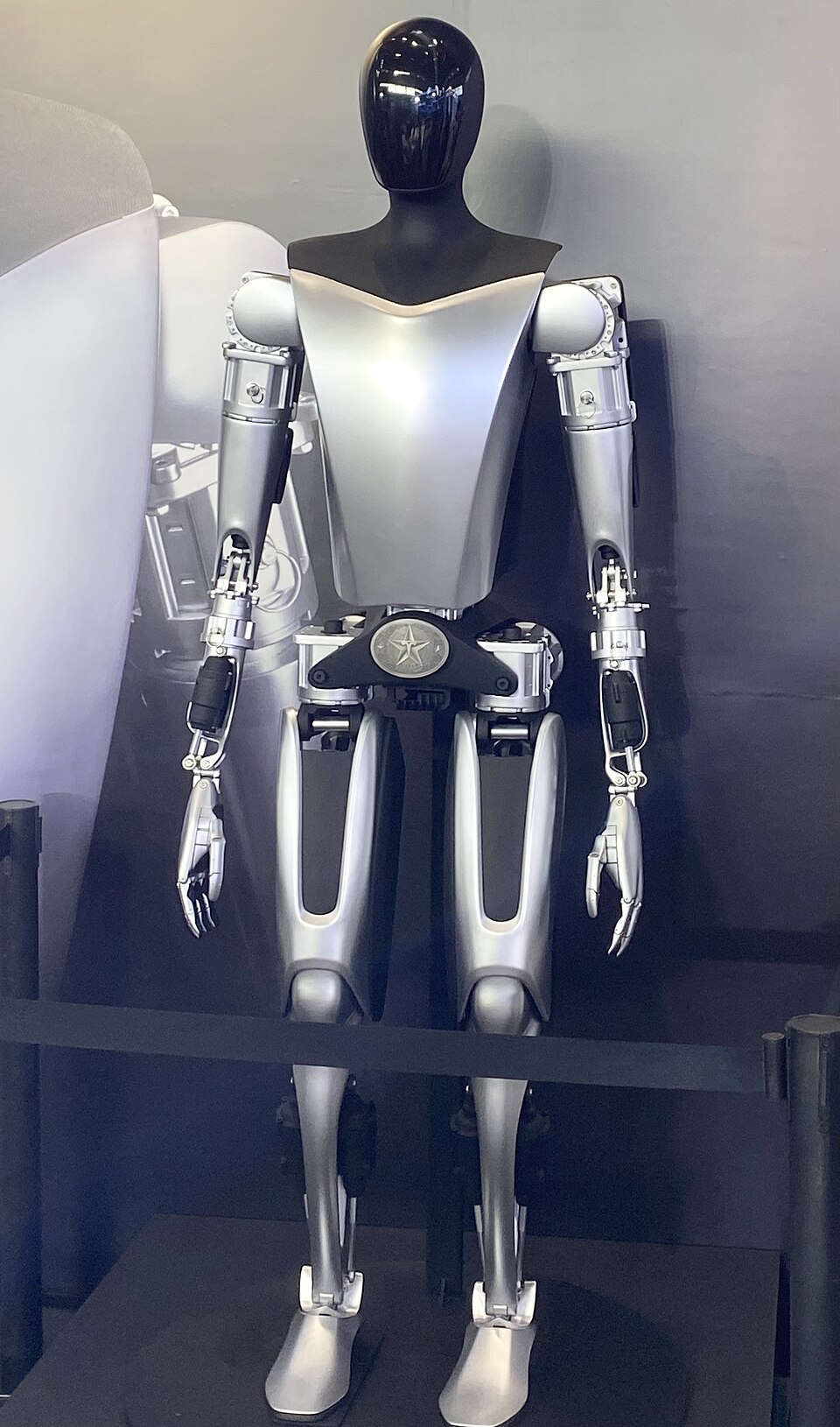

Competitors like NVIDIA, with its Isaac Sim platform, and Tesla, with its Optimus robot, are chasing similar goals but with different approaches. NVIDIA relies on simulation-to-real transfer, while Tesla emphasizes end-to-end hardware-software integration. AGIBOT’s model, by contrast, appears to be a software-first play—one that could appeal to companies already invested in robotic platforms but frustrated by their limitations.

The absence of open-source activity or developer community buzz around GO-2 is telling. Unlike Meta’s Llama or Stability AI’s Stable Diffusion, which sparked immediate experimentation, AGIBOT’s release feels closed-off. There’s no GitHub repo, no technical whitepaper, and no indication of whether the model will be made available to researchers or enterprises. That silence raises questions: Is GO-2 still in a controlled beta, or is AGIBOT simply not interested in community collaboration?

For now, the industry’s reaction has been muted. Without benchmarks or third-party validation, GO-2 remains a promise—one that could either redefine embodied AI or join the graveyard of overhyped robotic demos.