Microsoft’s MAI drops three models—just don’t call it a revolution

- MAI’s new models: voice-to-text, audio generation, and image generation, with no benchmarks yet

- Six months from formation to launch, but integration details remain vague

- Community calls them ‘early-stage prototypes’, so where’s the deployment reality?

Microsoft’s MAI (Microsoft AI) group just announced three foundation models (voice-to-text, audio generation, and image generation) six months after its quiet formation. That’s fast, even for a company racing to outmaneuver OpenAI, Google DeepMind, and Meta. But speed doesn’t equal substance: the announcement includes no performance metrics, no release timelines, and not even model names.

The voice-to-text model joins a crowded field dominated by Whisper, while the audio and image generators enter battles already fought by Suno and Midjourney. Microsoft’s play here isn’t innovation but integration. Early signals suggest these models will plug into Azure AI and Copilot, turning them into productivity tools rather than standalone marvels.

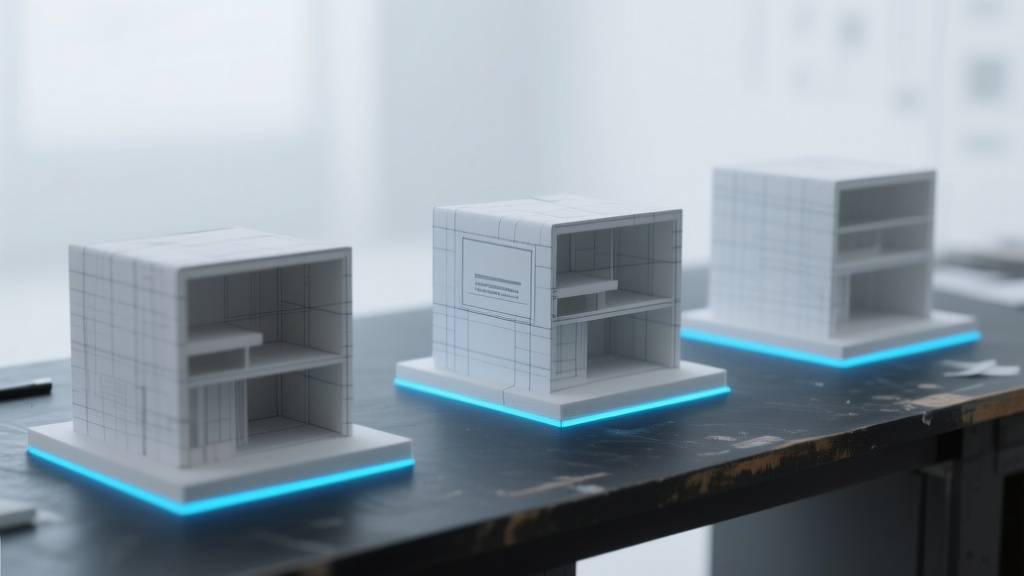

Yet the developer community isn’t holding its breath. Forum chatter frames these as ‘early-stage prototypes,’ with users noting the outputs lack the polish of incumbent tools. That’s the reality gap: demos look slick, but deployment, where latency, accuracy, and scalability matter, remains unproven.

The gap between demo and product widens as Redmond plays catch-up

The real story isn’t the models themselves but the timing. Microsoft is late to the generative AI party, and these releases read like table stakes to stay in the game. GitHub Copilot datasets and Office 365 interactions could give MAI a training edge, but only if the models escape the ‘internal prototype’ phase.

Competitive pressure is the unspoken driver. Google’s Gemini and Meta’s Llama already dominate attention; Microsoft’s bet is that tight Azure integration will win enterprise customers. But without benchmarks that measure real-world accuracy rather than synthetic tests, the ‘better together’ pitch rings hollow.

For now, MAI’s models are a signal flare: We’re still here. The question isn’t whether they work in a demo, but whether they’ll ship with the reliability of Whisper or the creativity of DALL·E 3. Until then, this is less a breakthrough and more a placeholder.